I’ve missed you, my lovelies! I dropped off the grid for a while in hopes of making progress on my book. I wrote some words. I also saw some beautiful things, and visited beloved friends and family. My husband and I celebrated the 25th anniversary of our first date. I made fairy houses for my seven-year-old niece, and my four-year-old nephew told me I looked pretty.

I took deep, freeing breaths.

Yet, it hasn’t been enough. I thought that if I deleted Facebook and Twitter from my phone, put my email on automatic reply, and took a break from obligations, I would add thousands of words to my manuscript with ease. Nnnnnyeah–that didn’t happen. For the past few weeks, my inner dialogue has gone like this:

Me: Ok! No Facebook. No Twitter. Make the words!

Myself: I can’t.

Me: Where are the words.

Myself: Stop bugging me.

Me: I arranged everything so you could just concentrate and word.

Myself: Back off. Seriously.

Me: WORD.

Myself: PISS OFF.

Then I read Theodora Goss’s post about her burnout: “[S]ometimes I was angry about how much I was expected to do, how much people assumed I could take on. . . . Burnout is when you’re stressed for so long, that eventually you just have no reserves left.”

Burnout? My life is no longer the oil pipeline fire it was a few years ago. I researched burnout back then, and even drafted a blog post about it last year. I wrote, “Burnout is being done . . . with the effort of moving forward, of staying positive, of staying engaged. The problem is having to go to the well one more time and finding it dry.”

So yeah, I’ve been struggling with burnout for a while. I recognized it over a year ago, and I started trimming activities and obligations. I tried to cut a bit here, get more organized and focused there, assuming that would be enough to make room for this book and my life, and everything would be fine.

And it is better. My stress level is down, to the point where I can take those deep, freeing breaths. But I’m still arguing with myself about making the words. I’m still spending too much time freaking out that things are not going according to my plan. I’m still resentful of even small disruptions, like the noise the cleaners are making in the next room as I type this. I guess I’m still feeling burned out.

My knee-jerk reaction to that realization is MOAR RULZ = MOAR WORDS. Ignore the news even more, ignore all of you even more, cut every single thing that is not absolutely essential. Just art harder.

Except . . . that approach is how I got here. When my Mom died and my husband had a stroke (I hate you, 2015), my existence narrowed down to what was necessary for our physical and financial survival. “Me time” was the label I slapped on fulfilling emotional obligations to others. I evaluated every activity and every choice as a transaction. Because my ability to function physically and cognitively is limited and unpredictable, I do something today and can only cross my fingers and hope I’ll be able to do something tomorrow as well. There is enormous pressure to get my energy’s worth, so to speak.

In a blog post with the delightful title Knitting At The End Of The World, Austin Kleon writes that while Nero didn’t literally fiddle while Rome burned, “there’s the other meaning of the word fiddle: to fidget or pass time aimlessly, without really achieving anything. And yet, fiddling, in this sense, is so much a part of how artists arrive at their work: they fiddle around, they putter, they waste time.”

Seeing everything as a transaction, cutting out everything that is not essential to survival, wasting no time on fiddling–this is not how one recovers from burnout. Theodora Goss says that she’s been recovering from her burnout by taking “the Marie Kondo principle of what to keep and what to discard–does it spark joy?–and apply it to my life.” In the last four years, the only impractical thing I’ve done simply for the joy of it is learning the cello, and even then I’ve done it in my usual structured way.

Last week, though, I sat in the car and knit while my husband wandered a Civil War battlefield. I watched the trees, and took one of those deep, freeing breaths. In that moment, I remembered that my feet are on the ground, my lungs are breathing air, and I’m ok. And while I sat in the car, knitting and watching the trees, I thought of you. When I took those deep, freeing breaths, I breathed out the beginning of these words you’re reading now. I need more of those kinds of moments, so I can write.

There is no way to eliminate obligations. I can’t delegate responsibility for our physical and financial health. I also can’t push myself to the next deadline (and the next and the next) in an endless chain of necessary transactions. I can’t buckle down and overcome my burnout with organization and determination any more than I can cure myself of ME through force of will.

What I learned this summer is that the equation is not more rules = more words. The equation is fiddling + breathing + time + love = more (and better) words.

My feet are on the ground. My lungs are breathing air. I miss you, but I made you some words.

NIH Funding for ME in 2019: The Details

There is still value in the detailed number crunching, though. One kind advocate said that she trusts my numbers more than she trusts NIH’s reporting (thank you!). Let’s dive in! (Note that I updated this post on October 28, 2020 with corrected numbers.)

2019 Actual Numbers

Based on currently available numbers, NIH spent $12,008,817 on investigator-initiated grants and the Collaborative Research Centers in FY2019. More than 60% of that funding went to the Centers. I address the problem with intramural funding in more detail in this post.

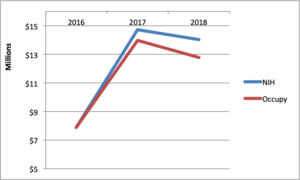

Unfortunately, NIH won’t release its numbers for intramural funding until next spring (and those numbers are not always accurate). I will update this post when those numbers are released, but for now we have to rely on the information that is publicly available.

Here is how 2019 compared to 2018. (You can read the details of 2018 here.)**

The 3% increase in the bottom line is due entirely to the increase in Research Center funding. It’s not until you look at the trend over time, particularly in each category of spending, that you see the dangerous drop in investigator-initiated (extramural) funding since 2017. More on that below.

Of the twelve extramural grants in 2019, seven continued from last year: Davis, Friedberg, Light, Unutmaz, Williams, Nacul, and Rayhan. There were five new grants: Abdullah, Daugherty, Li, Natelson, and Younger, but only Younger’s was a five-year grant.

The Research Centers are the same as last year: Columbia, Cornell, and Jackson Labs, with RTI as the Data Management Center. One note about Columbia’s Center: NIH gave the Center an administrative supplement award. However, Dr. Joe Breen of NIAID clarified that this award funded research on a different disease using methods from the ME work. I have excluded the supplement funding from my calculations.
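To make that kind of exclusion concrete, here is a minimal Python sketch of how a yearly tally could skip money that wasn’t spent on ME/CFS research, like the Columbia supplement. The `Award` record, the `tally` function, and the `counts_for_me` flag are hypothetical names, and the dollar amounts are made up for illustration; they are not the FY2019 figures, and this is not necessarily how the numbers in this post were computed.

```python
from dataclasses import dataclass


@dataclass
class Award:
    """One funding line for a fiscal year (hypothetical record layout)."""
    name: str
    category: str                # "grant" (investigator-initiated) or "center"
    amount: int                  # dollars in the fiscal year
    counts_for_me: bool = True   # False for money not spent on ME/CFS research


def tally(awards):
    """Sum dollars by category, skipping awards flagged as not ME/CFS research."""
    totals = {"grant": 0, "center": 0}
    for award in awards:
        if award.counts_for_me:
            totals[award.category] += award.amount
    return totals


# Made-up amounts for illustration only; these are NOT the FY2019 numbers.
awards = [
    Award("Investigator grant A", "grant", 400_000),
    Award("Investigator grant B", "grant", 600_000),
    Award("Research Center", "center", 3_000_000),
    Award("Center supplement for another disease", "center", 250_000, counts_for_me=False),
]

totals = tally(awards)
overall = sum(totals.values())
center_share = totals["center"] / overall
print(f"Total: ${overall:,}  Centers: {center_share:.0%}")
```

Keeping the excluded award in the list with a flag, rather than deleting it, makes it easy to compare the total with and without the excluded money.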

Once again, NIAID and NINDS provided the vast majority of funding (78%) across all categories. Eight additional Institutes contributed the remaining 22%, almost all of which went to the Research Centers. NIAID split its funding almost evenly between grants and Centers, with 52% going to investigator-initiated grants. NINDS spent 65% of its funding on the Research Centers, and the remainder on investigator-initiated grants.

Three grants are now in their last year of funding (Friedberg, Unutmaz, and Williams). These are all large five-year grants, totaling more than $1.5 million in FY2019 alone. If these grants are not renewed or replaced, investigator-initiated funding will drop by 34% next year.
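The 34% projection is a simple ratio: the expiring grants’ FY2019 funding divided by all FY2019 investigator-initiated funding. As a rough back-of-the-envelope check using only numbers stated in this post ($F_{\text{expiring}}$ and $F_{\text{inv}}$ are labels introduced here, and the 34% is presumably rounded), the implied investigator-initiated total lands between about $4.4 and $4.8 million, which fits with the Centers receiving more than 60% of the $12,008,817 total:

```latex
% Assumes the three expiring grants simply end and all other
% investigator-initiated funding stays at its FY2019 level.
\[
\text{drop} = \frac{F_{\text{expiring}}}{F_{\text{inv}}} \approx 0.34
\quad\Longrightarrow\quad
F_{\text{inv}} \approx \frac{F_{\text{expiring}}}{0.34}
  > \frac{\$1{,}500{,}000}{0.34} \approx \$4.41\ \text{million}
\]
\[
F_{\text{inv}} < (1 - 0.60) \times \$12{,}008{,}817 \approx \$4.80\ \text{million}
\]
```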

Which institutions and investigators are getting the most money? These seven investigators received 82.5% of the total FY2019 funding:

Further Observations

As discussed above, overall funding increased 3% from FY 2018. However, if we look back to 2017, it’s obvious that we are well below the high-water mark of NIH funding to date. Since 2017, our total funding has declined by 6%, while investigator-initiated funding declined 25%. I first raised a concern about the drop in investigator-initiated funding in 2017. I am now so alarmed by the implications of this that I wrote an entire post about it.

What can reverse the trend? NIH must issue more Requests for Applications with set-aside funding. I suspect that there are a number of investigators who would submit applications if they knew some were guaranteed to get funding.

My expectation is that NIH funding should grow substantially every single year. That is not happening, but it could. The only thing preventing NIH from setting aside funding for RFAs is NIH itself.

Meanwhile, time passes.

At the NIH ME/CFS Advocacy Call on October 17, 2019, Dr. Whittemore said the Trans-NIH ME/CFS Working Group was working on a strategic plan, with the NANDS report as a starting point. No timeline was provided.

**Note that NIH calculates the aggregate number differently than I do, because I do my best to exclude amounts that were not actually spent on ME/CFS research, as in my 2018 Fact Check post.

My thanks to Dr. Joe Breen at NIAID for providing me additional clarifying information.