On September 9, 2016, Queen Mary University of London released data from the PACE trial in compliance with a First-tier Tribunal decision on a Freedom of Information request by ME patient Alem Matthees. The day before, the PACE authors had released (without fanfare) their own reanalysis of the data using their original protocol methods. Today, Matthees and four colleagues published their analysis of the recovery data obtained from QMUL on Dr. Vincent Racaniello’s Virology Blog. These two reanalyses blow the lid off the PACE trial’s claims.

The bottom line? The PACE trial authors’ claims that CBT and GET are effective treatments for ME/CFS were grossly exaggerated.

Improvers

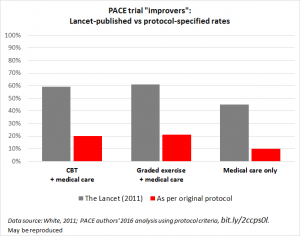

First, take a look at what the PACE authors’ own reanalysis showed. When they calculated improvement rates using their original protocol, the rates of improvement dropped dramatically.

As shown in the above graph by Simon McGrath, the Lancet paper claimed that 60% of patients receiving CBT or GET improved. But the reanalysis using the original protocol showed that only 20% of those patients improved, compared to 10% of those who received neither therapy. In other words, half of the people who benefited from CBT or GET would likely have improved anyway. Remember, the PACE authors made changes to the protocol after they began collecting data in this unblinded trial. Those changes, used in the Lancet paper, inflated the reported improvement rate three-fold.
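For readers who want to check the arithmetic, here is a minimal sketch using only the rounded percentages quoted above (60%, 20% and 10%). These are approximate figures read from the graph, not exact trial counts, and the "improved anyway" line assumes the standard-care improvement rate would apply equally to the CBT/GET groups.

```python
# Rounded improvement rates quoted in the text (percent of participants).
# Approximate figures from the graph, not exact trial counts.
LANCET_CBT_GET = 60.0    # improvement claimed in the Lancet paper
PROTOCOL_CBT_GET = 20.0  # improvement under the original protocol
PROTOCOL_SMC = 10.0      # improvement with neither therapy (standard care)


def ratio(numerator: float, denominator: float) -> float:
    """How many times larger one rate is than another."""
    return numerator / denominator


# How much the protocol changes inflated the reported improvement rate.
inflation = ratio(LANCET_CBT_GET, PROTOCOL_CBT_GET)

# CBT/GET improvement relative to standard care ("twice as many").
relative_to_smc = ratio(PROTOCOL_CBT_GET, PROTOCOL_SMC)

# Assumption: the standard-care rate applies equally to the treated groups,
# so this is the share of CBT/GET improvers who might have improved anyway.
improved_anyway = ratio(PROTOCOL_SMC, PROTOCOL_CBT_GET)

# Share of CBT/GET participants who did not improve.
not_improved = 100.0 - PROTOCOL_CBT_GET

print(f"Reported improvement inflated {inflation:.0f}-fold")
print(f"CBT/GET vs. standard care: {relative_to_smc:.0f} times as many improved")
print(f"Share of CBT/GET improvers expected anyway: {improved_anyway:.0%}")
print(f"CBT/GET participants who did not improve: {not_improved:.0f}%")
```

With these rounded inputs the script reports a three-fold inflation, twice as many improvers as standard care, half of the improvers plausibly expected anyway, and 80% of CBT/GET participants not improving.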

One would think that the PACE authors would be at least slightly embarrassed by this, but instead they continue to insist:

All three of these outcomes are very similar to those reported in the main PACE results paper (White et al., 2011); physical functioning and fatigue improved significantly more with CBT and GET when compared to APT [pacing] and SMC [standard medical care].

Sure, twice as many people improved with CBT and GET as with standard medical care. But 80% of the participants who received CBT or GET DID NOT IMPROVE. How can a treatment that fails 80% of the participants be considered a success?

Not only that, but the changes in the protocol worked like a magic wand, creating the impression of huge gains in function: 60% improved! The results under the original protocol, however, come close to a failed treatment trial.

Recovery

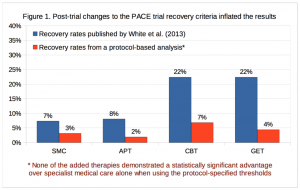

Today’s publication on Dr. Racaniello’s blog presents the analysis of the recovery outcome data obtained by Alem Matthees. Once again, the mid-stream changes to the study protocol grossly inflated the PACE results.

As the graph from the Matthees paper shows, the PACE authors claimed that more than 20% of subjects recovered with CBT and GET. Using the original protocol, however, those recovery rates fall more than three-fold. Furthermore, there is no statistically significant difference between those who received CBT or GET and those who received standard care or pacing instruction. In other words, the differences among the groups could easily have been the result of chance rather than of the therapy delivered.

Matthees, et al. conclude, “It is clear from these results that the changes made to the protocol were not minor or insignificant, as they have produced major differences that warrant further consideration.” In contrast, longtime CBT advocate Dr. Simon Wessely told Julie Rehmeyer that his view of the overall reanalysis was, “OK folks, nothing to see here, move along please.”

Taken together, the reanalyses of the improvement and recovery data show that the changes in the protocol resulted in grossly inflated rates of improvement and recovery. Let me state that again, for clarity: the PACE authors changed their definitions of improvement and recovery and then published the resulting four-fold higher rates of improvement and recovery without ever reporting or acknowledging the results under the original protocol, until now. Furthermore, the PACE authors resisted all efforts by outside individuals to obtain the data, spending £250,000 to oppose Matthees’ request alone.

Conclusions

Tuller’s detailed examination of the PACE trial and these new data analyses raise a number of questions about why these changes were made to the protocol:

- Were the PACE authors influenced by their relationships with insurance companies?

- Did they make the protocol changes after realizing that the FINE trial had basically failed using its original protocol?

- Why did they change their methods in the middle of the trial? (Matthees, et al. note that changing study endpoints is rarely acceptable)

- Were they influenced by the fact that the National Health Service expressed support for their treatments before the trial was even completed?

- Since data collection was well underway when the changes were made, and because PACE was an unblinded trial, we have to ask: did the PACE authors have an idea of the outcome trends when they decided to make the changes?

- Was their cognitive bias so great that it interfered with decisions about the protocol?

- Did the PACE authors analyze the data using the original protocol at any point? If so, when? How long did they withhold that analysis?

The grossly exaggerated results of the PACE trial were accepted without question by agencies such as the Centers for Disease Control and institutions such as the Mayo Clinic. The Lancet and other journals persist in justifying their editorial processes that approved publication of these grossly exaggerated results.

The voices of patients have been almost uniformly ignored and actively dismissed by the PACE authors and by journals. We knew the PACE results were too good to be true. A number of patients worked to uncover the problems and bring them to the attention of scientists. Their efforts went on for years, and finally gained traction with a broader audience after Tuller and Racaniello put PACE under the microscope.

For five years, the claim that CBT and GET are effective therapies for ME/CFS has been trumpeted in the media and in scientific circles. Medical education has been based on that claim. Policy decisions at CDC and other agencies have been based on that claim. Popular views of this disease and those who suffer with it have been shaped by that claim.

But this claim evaporates when the PACE authors’ original protocol is used. Eighty percent of the participants who received CBT or GET did not improve. Not only that, but we do not have any data on how many people in that group of 80% were harmed or got worse. CBT and GET may not be neutral therapies worth trying in case you fall into that lucky 20% who improved spontaneously or due to the treatment. We don’t know how many people got worse with these therapies, so we cannot assess the risks.

The end result is this: the PACE authors made changes to their protocol after data collection had begun, and published the inflated results. But when the original protocol is applied to the data, CBT and GET did not help the vast majority of participants. The PACE trial is unreliable and should not be used to justify the prescription of CBT and GET for ME patients.

As Matthees, et al., stated in their paper:

The PACE trial provides a good example of the problems that can occur when investigators are allowed to substantially deviate from the trial protocol without adequate justification or scrutiny. We therefore propose that a thorough, transparent, and independent re-analysis be conducted to provide greater clarity about the PACE trial results. Pending a comprehensive review or audit of trial data, it seems prudent that the published trial results should be treated as potentially unsound, as well as the medical texts, review articles, and public policies based on those results.

Great article, Jennie. I’m wondering if anyone has made a graph that shows all three versions: the original published PACE results, the recently revised PACE analysis, and the analysis by Alem Matthees’ team. That visual would reinforce the points you make so well with words.

I hope someone shoves this down Mayo’s throat.

Excuse me if I sound vicious and angry: it’s been a lot of years of cr*p, and all the paper says is ‘potentially’ unsound? At least some of the scientists have taken very strong positions about it being BAD SCIENCE with an AGENDA.

I’m disgusted at medical research in general (c’mon, folks, it’s been over 30 years), but the continued tentativeness of papers like this, which look as if they say something and then get weaselly in the conclusion so as not to offend someone or some institution, is just another kick in the stomach.

I’m a writer. I understand NUANCE. This one FAILS – from that one comment. Because now ‘journalists’ and ‘researchers’ will be able to quote that conclusion as ‘being in the original paper.’

Thanks for the report. Keep praying.

With respect, I think that is a very unfair and unrealistic comment.

Understatement and letting the basic numbers speak for themselves can be very powerful in the right context.

How far did being blunt and demanding get patients over the last 30 years? We all know the brutal answer to that.

If you are unhappy with the re-analysis, feel free to write your own. The FOI dataset used by Matthees et al is freely available online.

Skeet, if I understand Alicia’s comment, she was not disagreeing with the reanalysis. She disagreed with the statement of the conclusion and wanted it to be bolder. To your point, in the context of Matthees, et al., their conclusion is appropriately stated for a scientific paper. Alicia, even in writing my blog post, I dialed back my comments to be as fair and evenhanded as possible. Believe me, my personal opinions are much, much stronger.

Have the authors of the above mentioned study released the dataset that went along with their analysis, or is QMUL supposed to do that on their website or something like that? Show me the data!

There’s a link to the dataset from this tweet https://twitter.com/CoyneoftheRealm/status/778643034800005120?ref_src=twsrc%5Etfw

Stanford Researchers Seek People Living with Chronic Pain for Survey

http://nationalpainreport.com/stanford-researchers-seek-people-living-with-chronic-pain-for-survey-8831499.html

Very nice piece. Thank you, Jennie!

Thank you again, Jennie, for a very informative and easy-to-understand article. You rock! Thanks so much for being the BEST advocate for our community. SO much goes on behind the scenes that the general public would never know about without you.

Your tenacity has paid off tremendously. I hope those who were involved in the PACE trial are chastised by their peers, their patients, and all news media to the point that a lot of heads will roll.

You wrote: “we do not have any data on how many people in that group of 80% were harmed or got worse.”

right!!!! many of us with ME get permanently disabled by pushing our bodies.

thank you for this excellent blog post.

rivka

Golly, aren’t we grateful that NIH is protecting us from bad science?

Oh, wait, the evidence review they commissioned for P2P actually thought PACE was the bees’ knees!

Does it ever feel like the NIH is *protecting* us by sitting on us?

Excellent job of explaining what Matthees, et al., exposed about the PACE trial and publication and also of showing what the differences were in results with the PACE authors’ original criteria and then the changed criteria.

Vincent Racaniello has a good article at the Virology Blog. And Julie Rehmeyer, a science writer with ME/CFS, wrote a good essay at STAT. She went to a national conference of statisticians during the summer and exposed the PACE trial, using slides in her presentation to show the fallacies in the trial.

And David Tuller deserves a ton of credit and thanks for his investigation and paper on this.

Now what happens? I just feel sick thinking of the CDC and Mayo Clinic, as well as the British Health Services basing their view of this disease and treatment on the PACE study. How many people have been denied health care and disability benefits by governments and insurance companies? How many people have suffered from insensitive doctors and government and insurance officials? How many people have gotten sicker from that exercise regimen?

When will this reality be reflected in government, medical and insurance entities?

I think everyone denied care, benefits and compassion because of this horrific study, with the concomitant insistence by Lancet that it was correct and the refusal to fund scientific research here and in Britain, should be granted financial damages.

I think it’s going to take more pushing and advocacy to get this study taken seriously by the powers that be and for changes to be made.

Thank you, Jennie, for your analysis, commitment and sheer hard work.

This is surreal, and yet it really happened. Thank you so much for explaining this so clearly. The more I read about the scandal that PACE is, the fewer words I have.

I have seen little yet of the debunking of PACE in the mainstream media; how can this be rectified? Are they still under the spell of the Science Media Centre?

Thank you for summarising this so well. Generally agree with the other comments – that it’s great the truth is finally coming out, but the truth now needs to find its way into medical journals, doctors’ surgeries and mainstream media. I absolutely love the ‘Emperor’s New Clothes’ illustration at the top of this post – the whole story in a nutshell. So glad to have discovered your blog.

Thank you, and welcome!

The cartoon is great.

I agree that it will take a strong campaign to get the major media to cover the debunking of the PACE trial, which has been followed by NIH, the Mayo Clinic and the British health system.

More people denied care, disability and being mistreated.

I really didn’t expect any grant funding. It’s going to take more publicity, more pushing, more advocacy.